Like all theories, game theory rests on simplifying assumptions. One of these is the rationality of players: a player, like a true homo oeconomicus, will maximise her payoff. As a result, the outcome of a noncooperative game will be a Nash equilibrium, in which each player's strategy is a best response to the other players' strategies. But then how should we play when the opponent is not rational, especially if she has already made irrational choices?

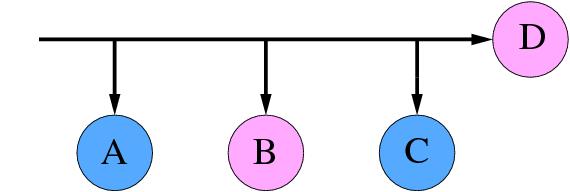

Alexandru Baltag's presentation at LGS7 visited the topic of knowledge, rationality and belief revision. When we start a game with given, clear rules, we expect the opponent to make good decisions. Take the following game: there are two players, Adam and Eve, who make alternating moves. Adam starts, then Eve, and so on. At each decision node the player to move either exits or continues. The game has four possible outcomes.

Adam's preferences: C > A > D > B

Eve's preferences: D > B > A > C

Ever since Selten, we have solved such games by backward induction.

- First we check what happens at the last decision node. Adam prefers C to D, so he chooses "C" and does not "continue" the game. We may therefore delete the option "D".

- Now it is Eve's move. Knowing that if she continues Adam will exit to get "C", she is really choosing between "B" and "C". Since she prefers "B", she exits.

- Finally we reach the first decision node. By the same argument, Adam chooses to exit and get "A", since he prefers A to B (the outcome Eve would pick if he continued).
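The three steps above can be sketched in a few lines of Python. This is only an illustration: the names `PREFS`, `NODES`, `FINAL_OUTCOME`, `prefers` and `backward_induction` are made up for this post, and the tree shape (each mover either exits with the listed outcome or continues, with "D" reached if Adam continues at the last node) is my reading of the description.

```python
# Ordinal preferences from the post, best outcome first.
PREFS = {
    "Adam": ["C", "A", "D", "B"],
    "Eve": ["D", "B", "A", "C"],
}

# Decision nodes in playing order: (mover, outcome if the mover exits).
# Assumption: continuing at the last node leads to outcome "D".
NODES = [("Adam", "A"), ("Eve", "B"), ("Adam", "C")]
FINAL_OUTCOME = "D"


def prefers(player, x, y):
    """True if `player` ranks outcome x strictly above outcome y."""
    return PREFS[player].index(x) < PREFS[player].index(y)


def backward_induction(nodes, final_outcome):
    """Solve the game from the last node back to the first.

    Returns the predicted outcome and, for each node in playing order,
    a tuple (mover, choice, outcome reached from that node).
    """
    value = final_outcome  # what happens if everybody continues
    plan = []
    for mover, exit_outcome in reversed(nodes):
        if prefers(mover, exit_outcome, value):
            choice, value = "exit", exit_outcome
        else:
            choice = "continue"
        plan.append((mover, choice, value))
    plan.reverse()
    return value, plan


outcome, plan = backward_induction(NODES, FINAL_OUTCOME)
print("Predicted outcome:", outcome)
for mover, choice, reached in plan:
    print(f"{mover}: {choice} (leads to {reached})")
```

With the preferences above, every player exits at every node, so the game ends immediately with "A".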

Well... I am not sure how clear the example was. The main point is that a blatant error may lead the opponent to believe that she can finish the game with a better-than-expected result. If we succeed in confusing her, then, despite the earlier error, it is we who reap all the benefit. It would be interesting to know whether such tactics are ever used in, e.g., chess. The retreating strategy of 5th-10th c. nomads (Huns to Hungarians) may have looked like bad tactics, but it worked well for a long time.
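To make the point concrete, here is a rough sketch of what could happen after Adam "wrongly" continues at the first node. Everything beyond the two preference orderings is my own assumption: the function names are hypothetical, and Eve's belief is modelled very crudely as the single outcome she expects if she continues ("C" if she still trusts Adam's rationality, "D" if she now expects him to blunder again).

```python
# Ordinal preferences from the post, best outcome first.
PREFS = {
    "Adam": ["C", "A", "D", "B"],
    "Eve": ["D", "B", "A", "C"],
}


def best(player, options):
    """Return the option that `player` ranks highest."""
    return min(options, key=PREFS[player].index)


def eve_reply(believed_last_outcome):
    """Eve's move, given the outcome she expects if she continues."""
    if best("Eve", ["B", believed_last_outcome]) == "B":
        return "exit"
    return "continue"


def play_after_adam_continues(believed_last_outcome):
    """Outcome of the game once Adam has continued at the first node."""
    if eve_reply(believed_last_outcome) == "exit":
        return "B"
    # Assumption: Adam is in fact rational at the last node,
    # so he picks his favourite of "C" and "D".
    return best("Adam", ["C", "D"])


print(play_after_adam_continues("C"))  # Eve still trusts Adam's rationality
print(play_after_adam_continues("D"))  # Eve now expects another blunder
```

Under these assumptions, if Eve keeps believing in Adam's rationality she exits and Adam ends up with "B", his worst outcome. If the "error" convinces her that he will continue again, she continues hoping for "D", and Adam exits with "C", his best outcome.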

